There’s way too much fearmongering in America, which helps to drive the paranoid nature of U.S. foreign and domestic policy. This is the subject of my latest article at TomDispatch.com, which I’ve included below in its entirety. If you don’t read TomDispatch, I urge you to subscribe (top right corner on the home page). Tom Engelhardt has been running the site for 20 years (I’ve been writing for it for 15 of them), and I’ve found the content to be stimulating and thought-provoking. Many thanks for your continued interest in “Bracing Views” as well, which, I joke to Tom, is a little like a baby TomDispatch.

Dystopia, Not Democracy

I have a brother with chronic schizophrenia. He had his first severe catatonic episode when he was 16 years old and I was 10. Later, he suffered from auditory hallucinations and heard voices saying nasty things to him. I remember my father reassuring him that the voices weren’t real and asking him whether he could ignore them. Sadly, it’s not that simple.

That conversation between my father and brother has been on my mind, as I’ve been experiencing America’s increasingly divided, almost schizoid, version of social discourse. It’s as if this country were suffering from some set of collective auditory hallucinations whose lead feature was nastiness.

Take cover! We’re being threatened by a revived red(dish) menace from a “rogue” Russia! A “Yellow Peril” from China! Iran with a nuke! And then there are the alleged threats at home. “Groomers”! MAGA kooks! And on and on.

Of course, America continues to face actual threats to its security and domestic tranquility. Here at home that would include regular mass shootings; controversial decisions by an openly partisan Supreme Court; the Capitol riot that the House January 6th select committee has repeatedly reminded us about; and growing uncertainty when it comes to what, if anything, still unifies these once United States. All this has Americans increasingly vexed and stressed.

Meanwhile, internationally, wars and rumors of war continue to be a constant plague, made worse by the exaggeration of threats to national security. History teaches us that such threats have sometimes not just been inflated but created ex nihilo. Those would, for instance, include the non-existent Gulf of Tonkin attack cited as the justification for a major military escalation of the war in Vietnam in 1965 or those non-existent weapons of mass destruction in Iraq used to justify the 2003 U.S. invasion of that country.

All this and more is combining to create a paranoid and increasingly violent country, an America deeply fearful and perpetually thinking about warring on other peoples as well as on itself.

My brother’s doctors treated him as best they could with various drugs and electroshock therapy. Crude as that treatment regimen was then (and remains today), it did help him cope. But what if his doctors, instead of trying to reduce his symptoms, had conspired to amplify them? Indeed, what if they had told him that he should listen to those voices and so aggravate his fears? What if they had advised him that sanity meant arming himself against those very voices? Wouldn’t we, then or now, have said that they were guilty of the worst form of medical malpractice?

And isn’t that, by analogy, true of America’s leaders in these years, as they’ve driven this society to be ever less trusting and more fearful in the name of protecting and advancing their wealth, power, and security?

Fear Is the Mind-Killer

If you’re plugged into the mental matrix that’s America in 2022, you’re constantly exposed to fear. Fear, as Frank Herbert wrote in Dune, is the mind-killer. The voices around us encourage it. Fear your MAGA-hat-wearing neighbor with his steroidal truck and his sizeable collection of guns as he supposedly plots a coup against America. Alternatively, fear your “libtard” neighbor with her rainbow peace flag as she allegedly plots to confiscate your guns and brainwash your kids. Small wonder that more than 37 million Americans take antidepressants, roughly one in nine of us, or that, in 2016, this country accounted for 80% of the global market for opioid prescriptions.

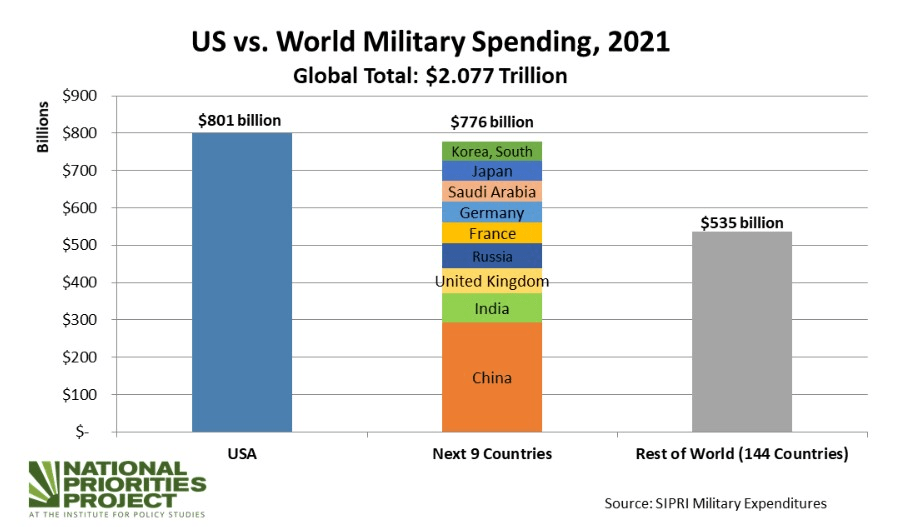

A climate of fear has led to 43 million new guns being purchased by Americans in 2020 and 2021 in a land singularly awash in more than 400 million firearms, including more than 20 million assault rifles. A climate of fear has led to police forces being heavily militarized and fully funded rather than “defunded” (which actually would mean a bit less money going to the police and a bit more to non-violent options like counseling and mental-health services). A climate of fear has led Democrats and Republicans in the House of Representatives who can agree on little else to vote almost unanimously to fork over $840 billion to the Pentagon in Fiscal Year 2023 for yet more wars and murderous weaponry. (Of course, the true budget for what is still coyly called “national defense” will soar well above a trillion dollars then, as it often has since 9/11/2001 and the announcement of a “global war on terror.”)

The idea that enemies are everywhere is, of course, useful if you’re seeking to create a heavily armed and militarized form of insanity.

It’s summer and these days it just couldn’t be hotter, so perhaps you’ll allow me to riff briefly on a scene I’ve never forgotten from The Big Red One, a war film I saw in 1980. It involved a World War II firefight between American and German troops in a Belgian insane asylum during which one of the mental patients picks up a submachine gun and starts blasting away, shouting, “I am one of you. I am sane!” In 2022, sign him up and give him a battlefield commission.

Where fear is omnipresent and violence becomes routinized and normalized, what you end up with is dystopia, not democracy.

We Must Not Be Friends but Enemies

At this point, consider us to be in a distinctly upside-down world. Reverse Abraham Lincoln’s moving plea to Southern secessionists in his first inaugural address in 1861, “We are not enemies, but friends. We must not be enemies,” and you’ve summed up all too well our domestic and foreign policy today. No, we’re in neither a civil war nor a world war yet, but America’s national (in)security state does continue to insist that virtually every rival to our imperial being must be transformed into an enemy, whether it’s Russia, China, or much of the Middle East. Enemies are everywhere and must be feared, or so we’re repeatedly told anyway.

I remember well the time in 1991-1992 when the Soviet Union collapsed and America emerged as the sole victorious superpower of the Cold War. I was a captain then, teaching history at the U.S. Air Force Academy. Those were also the years when, even without the Soviet Union, the militarization of this society somehow never seemed to end. Around the same time, in launching a conflict against Saddam Hussein’s Iraq, this country officially kicked ass in the Middle East and President George H.W. Bush assured Americans that, by going to war again, we had also kicked our “Vietnam Syndrome” once and for all. Little did we guess then that two deeply destructive and wasteful quagmire wars, entirely unnecessary for our national defense, awaited us in Afghanistan and Iraq in the century to come.

Never has a country squandered victory — and a genuinely global victory at that! — so completely as ours has over the last 30 years. And yet there are few in power who consider altering the fearful course we’re still on.

A significant culprit here is the military-industrial-congressional complex that President Dwight D. Eisenhower warned Americans about in his farewell address in 1961. But there’s more to it than that. The United States has, it seems, always reveled in violence, possibly as an antidote to being consumed by fear. Yet the intensity of both violence and fear seems to be soaring. Yes, our leaders clearly exaggerated the Soviet threat during the Cold War, but at least there was indeed a threat. Vladimir Putin’s Russia isn’t close to being in the same league, yet they’ve treated his war with Ukraine as if it were an attack on California or Texas. (That and the Pentagon budget may be the only things the two parties can mostly agree on.)

Recall that, after the collapse of the Soviet Union, Russia was in horrible shape, a toothless, clawless bear, suffering in its cage. Instead of trying to help, our leaders decided to mistreat it further. To shrink its cage by expanding NATO. To torment it through various forms of economic exploitation and financial appropriation. “Russia Is Finished,” declared the cover article of the Atlantic Monthly in May 2001, and no one in America seemed faintly concerned. Mercy and compassion were in short supply as all seemed right with the “sole superpower” of Planet Earth.

Now the Russian Bear is back — more menacing than ever, we’re told. Marked as “finished” two decades ago, that country is supposedly on the march again, not just in its invasion of Ukraine but in President Vladimir Putin’s alleged quest for a new Russian empire. Instead of Peter the Great, we now have Putin the Great glowering at Europe — unless, that is, America stands firm and fights bravely to the last Ukrainian.

Add to that ever-fiercer warnings about a resurgent China that echo the racist “Yellow Peril” tropes of more than a century ago. Why, for example, must President Joe Biden speak of China as a competitor and threat rather than as a trade partner and potential ally? Even anti-communist zealot Richard Nixon went to China during his presidency and made nice with Chairman Mao, if only to complicate matters for the Soviet Union.

If imperial America were willing to share the world on roughly equal terms, Russia and China could be “near-peer” friends instead of, in the Pentagon phrase of the moment, “near-peer adversaries.” Perhaps they could even be allies of a kind, rather than rivals always on the cusp of what might potentially become a world-ending war. But the voices that seek access to our heads prefer to whisper sneakily of enemies rather than calmly of potential allies in creating a better planet.

And yet, guess what, whether anyone in Washington admits it or not: we’re already rather friendly with (as well as heavily dependent on) China. Here are just two recent examples from my own mundane life. I ordered a fan (it’s hot as I type these words in my decidedly un-air-conditioned office) from AAFES, a department store of sorts that serves members of the military, active-duty or retired, and their families. It arrived a few days later at an affordable price. As I put it together, I saw the label: “Made in China.” Thank you for the cooling breeze, Xi Jinping!

Then I decided to order a Henley shirt from Jockey, a name with a thoroughly American pedigree. You guessed it! That shirt was plainly marked “Made in China.” (Jockey, to its credit, does have a “Made in America” collection, and I got two white cotton T-shirts from it.) You get my point: the American consumer would be lost without China, the present workshop of the world.

You’d think a war, or even a new Cold War, with America’s number-one provider of stuff of every sort would be dumb, but no one is going to lose any bets by underestimating how dumb Americans can be. Otherwise, how can you explain Donald Trump? And not just his presidency either. What about his “Trump steaks,” “Trump University,” even “Trump vodka”? After all, who could be relied upon to know more about the quality of vodka than a man who refuses to drink it?

Learning from Charlie Brown

Returning to fears and psychiatric help, one of my favorite scenes is from “A Charlie Brown Christmas.” In that classic 1965 cartoon holiday special, Lucy ostensibly tries to help Charlie with his seasonal depression by labeling what ails him. The wannabe shrink goes through a short list of phobias until she lands on “pantophobia,” which she defines as “the fear of everything.” Charlie Brown shouts, “That’s it!”

Deep down, he knows perfectly well that he isn’t afraid of everything. What he doesn’t know, however, and what that cartoon is eager to show us, is how he can snap out of his mental funk. All that he needs is a little love, a little hands-on kindness from the other children.

America writ large today is, to my mind, a little like Charlie Brown — down in the dumps, bedraggled, having lost a clear sense of what life in our country should be all about. We need to come together and share a measure of compassion and love. Except our Lucys aren’t trying to lend a hand at the “psychiatric help” stand. They’re trying to persuade us that pantophobia, the fear of everything, is normal, even laudable. Their voices keep telling us to fear — and fear some more.

It’s not easy, America, to tune those voices out. My brother could tell you that. At times, he needed an asylum to escape them. What he needed most, though, was love or at least some good will and understanding from his fellow humans. What he didn’t need was more fear and neither do we. We — most of us anyway — still believe ourselves to be the “sane” ones. So why do we continue to tolerate leaders, institutions, and whole political parties intent on eroding our sanity and exploiting our fears in service of their own power and perks?

Remember that mental patient in The Big Red One, who picks up a gun and starts blasting people while crying that he’s “sane”? We’ll know we’re on the path to sanity when we finally master our fear, put down our guns, and stop eternally preparing to blast people at home and abroad.

Copyright 2022 William J. Astore